About User Experience and Elektrobit’s UX solutions

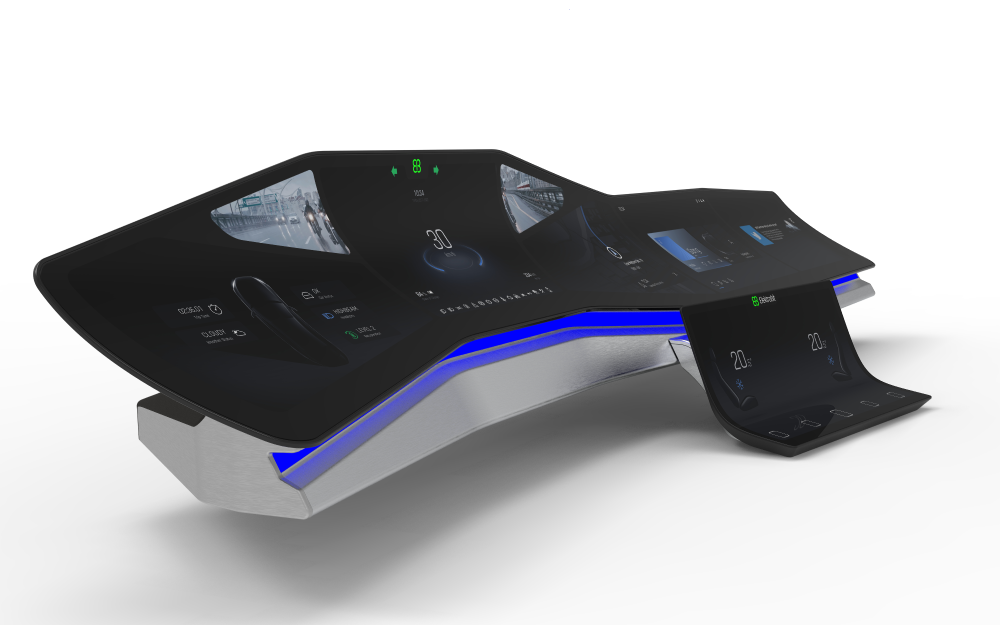

In-vehicle human-machine interface (HMI) systems matter to consumers making purchasing decisions and to manufacturers who want to offer great usability and reliability while also taking advantage of branding opportunities.

Elektrobit offers a complete range of services and integrated solutions customized to meet your particular goals and requirements.

Here are three facts you should know about our approach to HMI projects:

- Our development teams use agile development methods and a continuous improvement process.

- Our close contact with car makers and Tier 1 suppliers keeps us aware of upcoming HMI trends, so we can address them at an early stage.

- Our solutions include licensing of our best-in-class HMI products.

Expertise in HMI development

With more than 15 years of experience creating HMIs, our consultants bring expertise that spans software development, software architecture, usability, and development processes for best-in-class HMIs.

A reusable HMI approach

We want you to get the most from your investment, which is why we believe in implementing a continuous HMI development strategy that leads to maintainable HMIs and manages variants for different countries and brands. Elektrobit can help you implement a long-term HMI strategy that strengthens your brand value and reduces costs.

A team exactly the size you need

Whether it’s a single EB engineer providing consulting on a specific aspect of HMI, a small team for porting and usability testing, or a large team to manage the complete HMI development, Elektrobit can assist you.

Boost your HMI project with our comprehensive UX services

Engineering services

UX engineering services

The know-how, tools, and teams to bring your next generation of sleek, intuitive user interfaces to the road, including:

Amazon Alexa integration

Build a voice-first in-car experience powered by Amazon Alexa.

Elektrobit’s services for Android Automotive

Deliver richer in-car user experiences with Elektrobit’s services for Android Automotive.
